DP-600 Microsoft Implementing Analytics Solutions Using Microsoft Fabric Free Practice Exam Questions (2026 Updated)

Prepare effectively for your Microsoft DP-600 Implementing Analytics Solutions Using Microsoft Fabric certification with our extensive collection of free, high-quality practice questions. Each question is designed to mirror the actual exam format and objectives, complete with comprehensive answers and detailed explanations. Our materials are regularly updated for 2026, ensuring you have the most current resources to build confidence and succeed on your first attempt.

You have a Fabric workspace named Workspace1.

You need to create a semantic model named Model1 and publish Model1 to Workspace1. The solution must meet the following requirements:

Can revert to previous versions of Model1 as required.

Identifies differences between saved versions of Model1.

Uses Microsoft Power BI Desktop to publish to Workspace1.

Can edit item definition files by using Microsoft Visual Studio Code.

Which two actions should you perform? Each correct answer presents part of the solution.

NOTE: Each correct selection is worth one point.

QUESTION NO: 83 HOTSPOT

You have an Azure Data Lake Storage Gen2 account named storage1 that contains a Parquet file named sales.parquet.

You have a Fabric tenant that contains a workspace named Workspace1.

Using a notebook in Workspace1, you need to load the content of the file to the default lakehouse. The solution must ensure that the content will display automatically as a table named Sales in Lakehouse explorer.

How should you complete the code? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.
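
For orientation, a load like the one described above is often written in PySpark by reading the Parquet file into a DataFrame and saving it as a managed Delta table. The sketch below is a minimal illustration under stated assumptions, not a reproduction of the exam's answer area: the `load_parquet_as_table` helper and the `<container>` placeholder in the path are hypothetical, and `spark` is assumed to be the session that a Fabric notebook provides.

```python
def load_parquet_as_table(spark, source_path, table_name):
    """Read a Parquet file and save it as a managed Delta table.

    `spark` is assumed to be the SparkSession that a Fabric notebook exposes.
    """
    # Read the Parquet file into a Spark DataFrame.
    df = spark.read.parquet(source_path)
    # Save as a managed Delta table so that it is registered as a table
    # in the attached lakehouse instead of being left as loose files.
    df.write.mode("overwrite").format("delta").saveAsTable(table_name)
    return df


# Hypothetical path: the container name is not given in the question.
SOURCE_PATH = "abfss://<container>@storage1.dfs.core.windows.net/sales.parquet"

# In a notebook attached to the default lakehouse, the call would look like:
# load_parquet_as_table(spark, SOURCE_PATH, "Sales")
```

Writing with `saveAsTable` rather than saving to a file path is the design choice that matters here, because it registers the result as a table rather than as files.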

You have a Fabric workspace named Workspace1 that is assigned to a newly created Fabric capacity named Capacity1.

You create a semantic model named Model1 and deploy Model1 to Workspace1.

You need to publish changes to Model1 directly from Tabular Editor.

What should you do?

What should you use to implement calculation groups for the Research division semantic models?

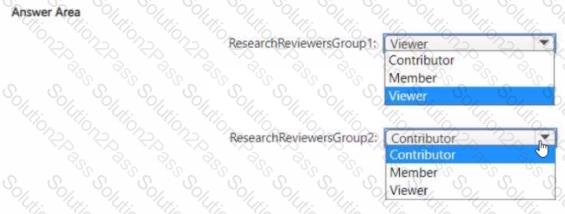

Which workspace role assignments should you recommend for ResearchReviewersGroup1 and ResearchReviewersGroup2? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

You need to ensure that Contoso can use version control to meet the data analytics requirements and the general requirements. What should you do?

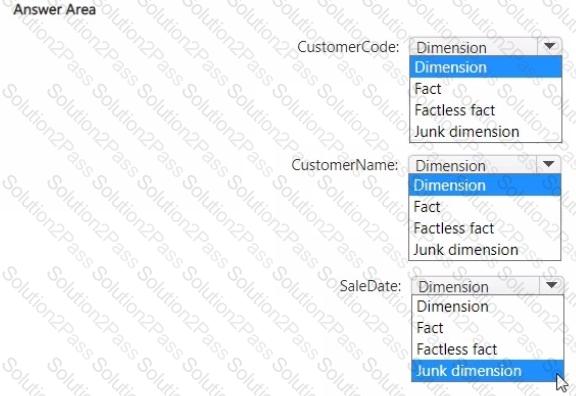

You have source data in a CSV file that has the following fields:

• SalesTransactionID

• SaleDate

• CustomerCode

• CustomerName

• CustomerAddress

• ProductCode

• ProductName

• Quantity

• UnitPrice

You plan to implement a star schema for the tables in WH1. The dimension tables in WH1 will implement Type 2 slowly changing dimension (SCD) logic.

You need to design the tables that will be used for sales transaction analysis and load the source data.

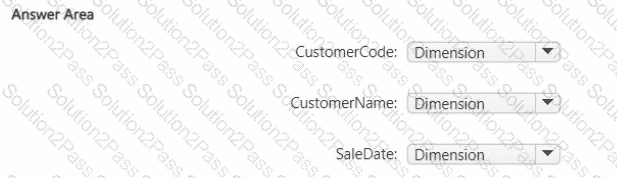

Which type of target table should you specify for the CustomerName, CustomerCode, and SaleDate fields? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.
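
Type 2 SCD logic keeps history by closing the current dimension row and adding a new version whenever a tracked attribute changes. A minimal pure-Python sketch of that idea follows. The `apply_scd2` helper, the column names `customer_key`, `valid_from`, `valid_to`, and `is_current`, and the sample customer values are illustrative assumptions, not anything defined for WH1.

```python
from datetime import date


def apply_scd2(dimension, incoming, load_date):
    """Apply Type 2 SCD logic to a list of customer dimension rows.

    dimension: list of dicts (mutated in place and returned).
    incoming:  dict with CustomerCode, CustomerName, CustomerAddress.
    """
    tracked = ("CustomerName", "CustomerAddress")
    # Find the current version of this customer, if one exists.
    current = next(
        (r for r in dimension
         if r["CustomerCode"] == incoming["CustomerCode"] and r["is_current"]),
        None,
    )
    if current and all(current[c] == incoming[c] for c in tracked):
        return dimension  # Nothing changed, so no new version is needed.
    if current:
        # Expire the old version instead of overwriting it.
        current["valid_to"] = load_date
        current["is_current"] = False
    dimension.append({
        "customer_key": len(dimension) + 1,  # surrogate key
        "CustomerCode": incoming["CustomerCode"],
        **{c: incoming[c] for c in tracked},
        "valid_from": load_date,
        "valid_to": None,
        "is_current": True,
    })
    return dimension


# Hypothetical sample data: the same customer changes address.
dim = []
apply_scd2(dim, {"CustomerCode": "C1", "CustomerName": "Ann",
                 "CustomerAddress": "1 Main St"}, date(2024, 1, 1))
apply_scd2(dim, {"CustomerCode": "C1", "CustomerName": "Ann",
                 "CustomerAddress": "9 Oak Ave"}, date(2024, 6, 1))
```

After the second call, `dim` holds two versions of the same customer: the first is expired and the second is current. In a design like this, fact rows would typically reference the surrogate key rather than the business key, so each sale stays tied to the customer version that was valid at the time.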


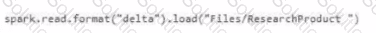

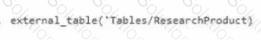

Which syntax should you use in a notebook to access the Research division data for Productline1?

A)

B)

C)

D)

Which type of data store should you recommend in the AnalyticsPOC workspace?

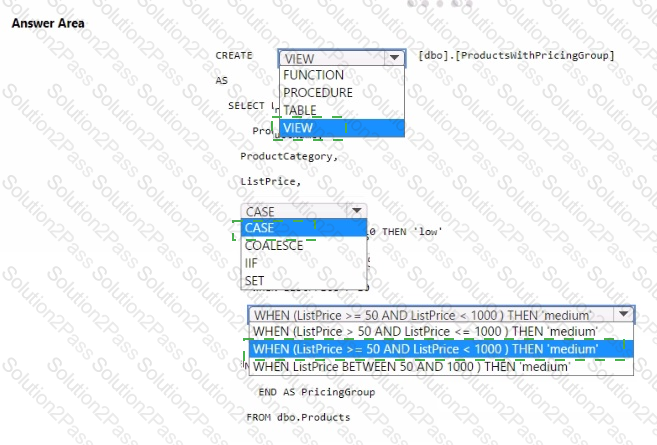

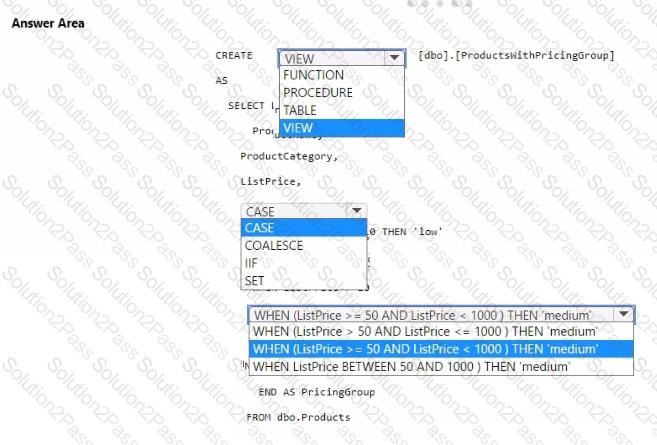

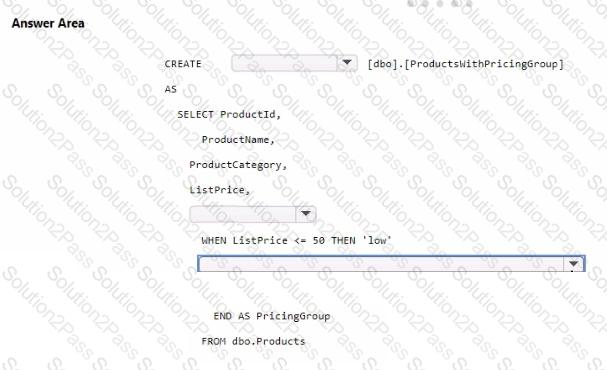

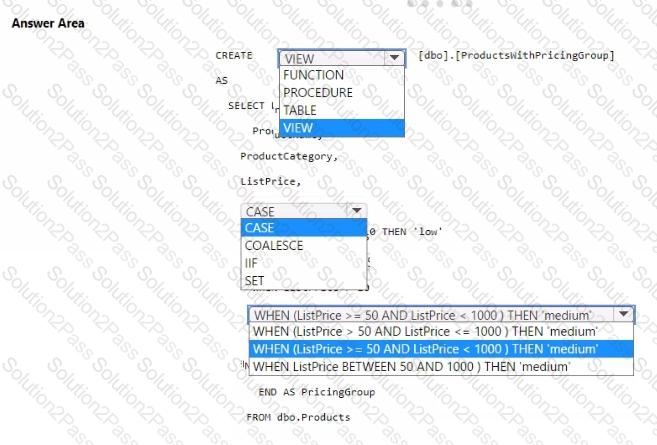

You need to resolve the issue with the pricing group classification.

How should you complete the T-SQL statement? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

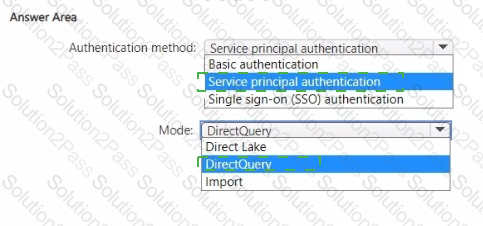

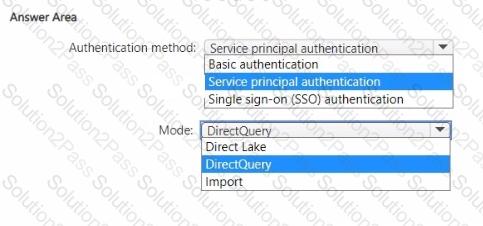

You need to design a semantic model for the customer satisfaction report.

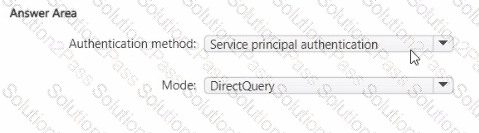

Which data source authentication method and mode should you use? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

You need to implement the date dimension in the data store. The solution must meet the technical requirements.

What are two ways to achieve the goal? Each correct answer presents a complete solution.

NOTE: Each correct selection is worth one point.

What should you recommend using to ingest the customer data into the data store in the AnalyticsPOC workspace?

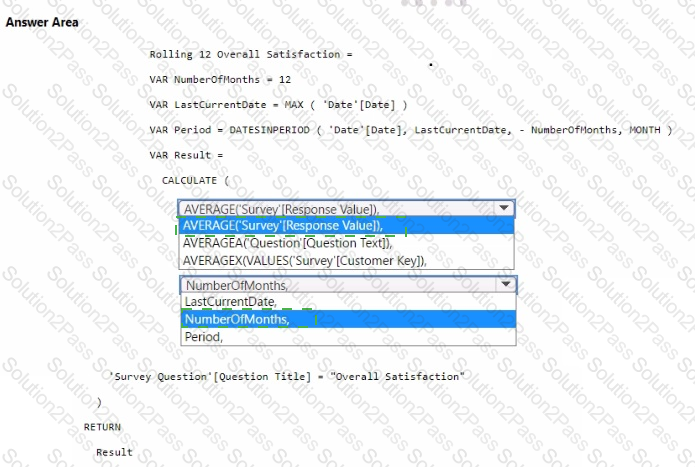

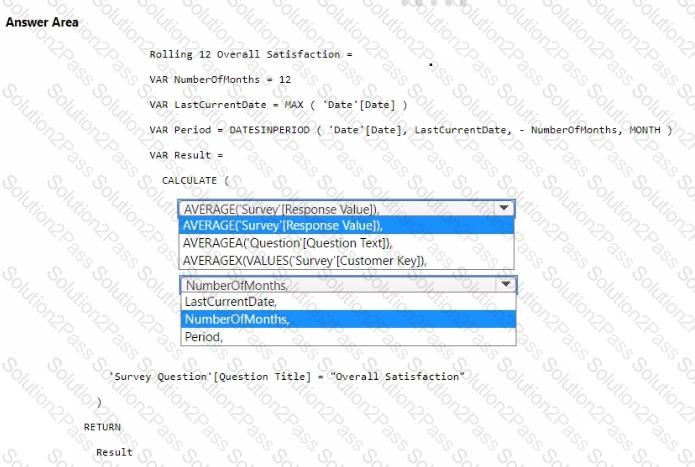

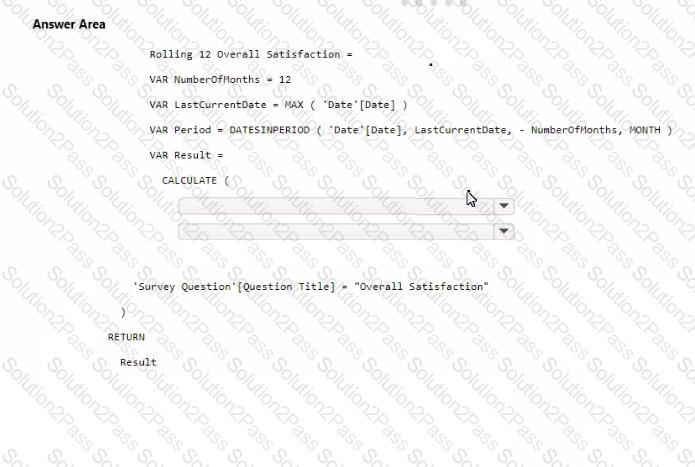

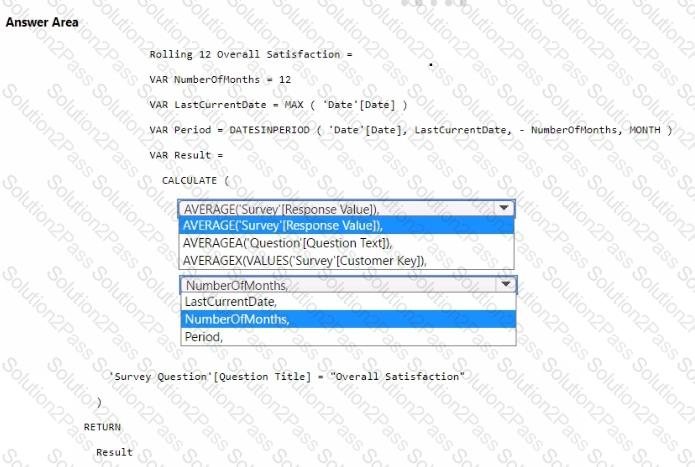

You need to create a DAX measure to calculate the average overall satisfaction score.

How should you complete the DAX code? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

You need to ensure the data loading activities in the AnalyticsPOC workspace are executed in the appropriate sequence. The solution must meet the technical requirements.

What should you do?

You need to recommend a solution to prepare the tenant for the PoC.

Which two actions should you recommend performing from the Fabric Admin portal? Each correct answer presents part of the solution.

NOTE: Each correct answer is worth one point.

You have a Fabric warehouse named Warehouse1 that contains a table named Table1. Table1 contains customer data.

You need to implement row-level security (RLS) for Table1. The solution must ensure that users can see only their respective data.

Which two objects should you create? Each correct answer presents part of the solution.

NOTE: Each correct selection is worth one point.

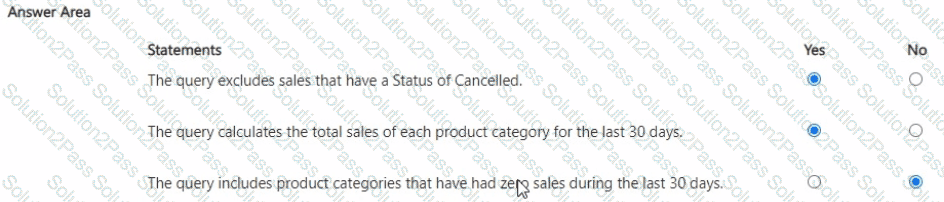

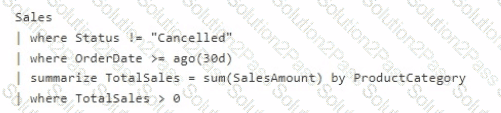

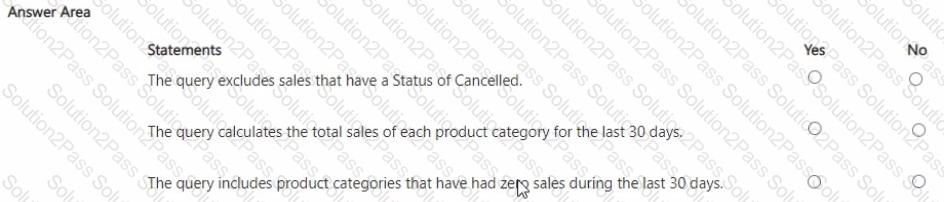

You have the following KQL query.

For each of the following statements, select Yes if the statement is true. Otherwise, select No.

NOTE: Each correct selection is worth one point.

You need to create a data loading pattern for a Type 1 slowly changing dimension (SCD).

Which two actions should you include in the process? Each correct answer presents part of the solution.

NOTE: Each correct answer is worth one point.
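
As background, a Type 1 SCD keeps no history: a changed attribute overwrites the stored value, and unseen keys are added as new rows. The pure-Python sketch below illustrates that behaviour under stated assumptions. The `apply_scd1` helper, the `id` and `city` columns, and the sample rows are hypothetical and do not come from the question.

```python
def apply_scd1(dimension, incoming_rows, key):
    """Type 1 SCD load: overwrite matching rows, insert new ones, keep no history.

    dimension:     list of dicts (mutated in place and returned).
    incoming_rows: list of dicts from the source system.
    key:           name of the business-key column used for matching.
    """
    by_key = {row[key]: row for row in dimension}
    for row in incoming_rows:
        existing = by_key.get(row[key])
        if existing is not None:
            # Overwrite attributes in place; the old values are lost.
            existing.update(row)
        else:
            # First time this key is seen, so insert it as a new row.
            new_row = dict(row)
            dimension.append(new_row)
            by_key[row[key]] = new_row
    return dimension


# Hypothetical sample data: one changed row and one new row.
customers = [{"id": 1, "city": "Oslo"}]
apply_scd1(customers, [{"id": 1, "city": "Bergen"},
                       {"id": 2, "city": "Rome"}], key="id")
```

After the call, the row for `id` 1 carries the new city only, and the row for `id` 2 has been appended.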

You have a Fabric tenant that contains a lakehouse named Lakehouse1. Lakehouse1 contains a table named Table1.

You are creating a new data pipeline.

You plan to copy external data to Table1. The schema of the external data changes regularly.

You need the copy operation to meet the following requirements:

• Replace Table1 with the schema of the external data.

• Replace all the data in Table1 with the rows in the external data.

You add a Copy data activity to the pipeline. What should you do for the Copy data activity?