DP-700 Microsoft Implementing Data Engineering Solutions Using Microsoft Fabric Free Practice Exam Questions (2026 Updated)

Prepare effectively for your Microsoft DP-700 Implementing Data Engineering Solutions Using Microsoft Fabric certification with our extensive collection of free, high-quality practice questions. Each question is designed to mirror the actual exam format and objectives, complete with comprehensive answers and detailed explanations. Our materials are regularly updated for 2026, ensuring you have the most current resources to build confidence and succeed on your first attempt.

You have a Fabric workspace named Workspace1 that contains the following items:

• A Microsoft Power BI report named Report1

• A Power BI dashboard named Dashboard1

• A semantic model named Model1

• A lakehouse named Lakehouse1

Your company requires that specific governance processes be implemented for the items. Which items can you endorse in Fabric?

You need to recommend a solution to resolve the MAR1 connectivity issues. The solution must minimize development effort. What should you recommend?

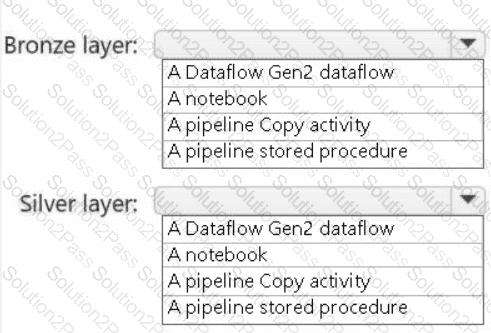

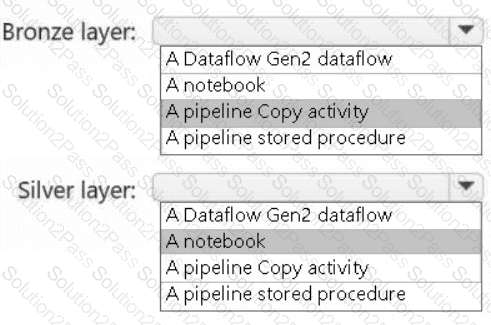

You need to recommend a method to populate the POS1 data to the lakehouse medallion layers.

What should you recommend for each layer? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

HOTSPOT

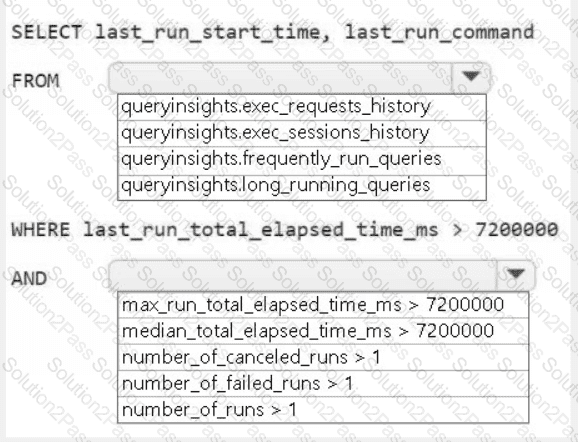

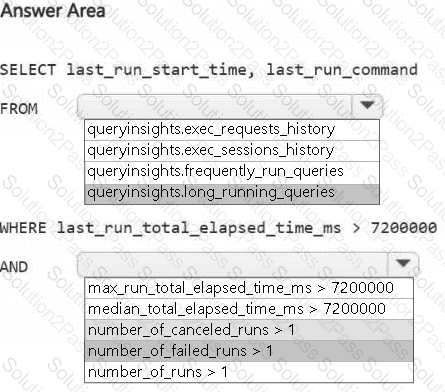

You need to troubleshoot the ad-hoc query issue.

How should you complete the statement? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

You need to create a workflow for the new book cover images.

Which two components should you include in the workflow? Each correct answer presents part of the solution.

NOTE: Each correct selection is worth one point.

What should you do to optimize the query experience for the business users?

You need to ensure that processes for the bronze and silver layers run in isolation. How should you configure the Apache Spark settings?

You need to resolve the sales data issue. The solution must minimize the amount of data transferred.

What should you do?

You need to implement the solution for the book reviews.

What should you do?

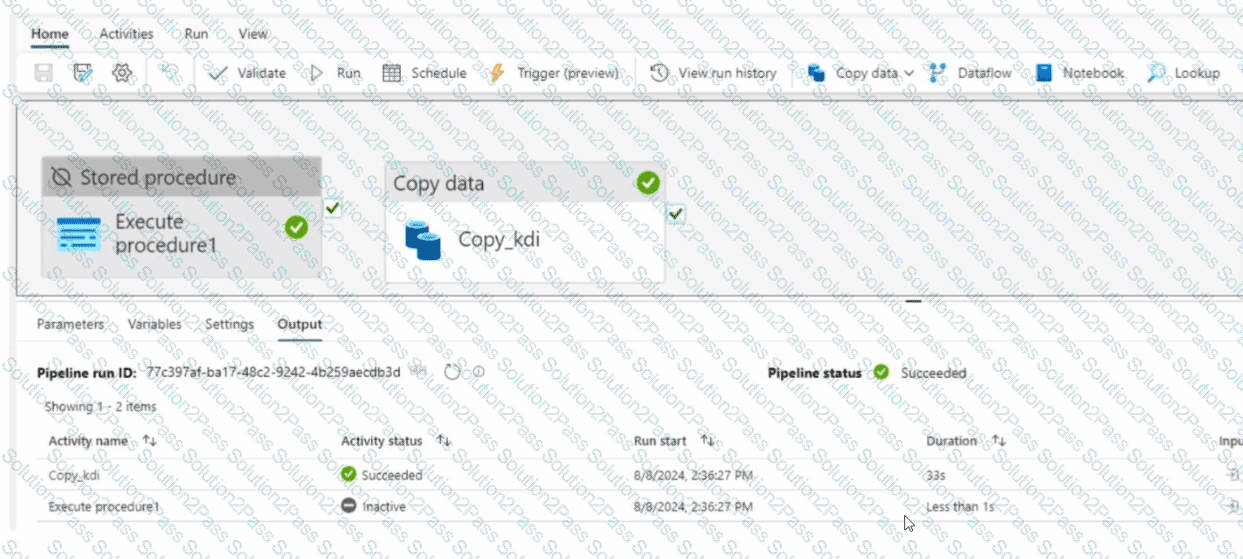

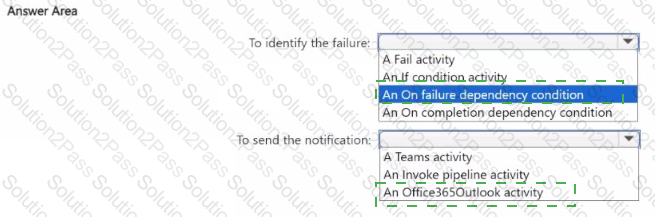

You have a Fabric workspace that contains a data pipeline named Pipeline1 as shown in the exhibit.

(Click the Exhibit tab.) What will occur the next time Pipeline1 runs?

You have a Fabric workspace named Workspace1 that contains a notebook named Notebook1.

In Workspace1, you create a new notebook named Notebook2.

You need to ensure that you can attach Notebook2 to the same Apache Spark session as Notebook1.

What should you do?

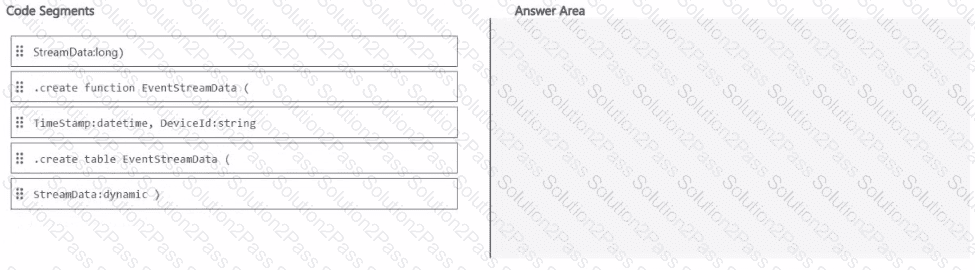

You have a Fabric workspace that contains an eventhouse named Eventhouse1.

In Eventhouse1, you plan to create a table named DeviceStreamData in a KQL database. The table will contain data based on the following sample.

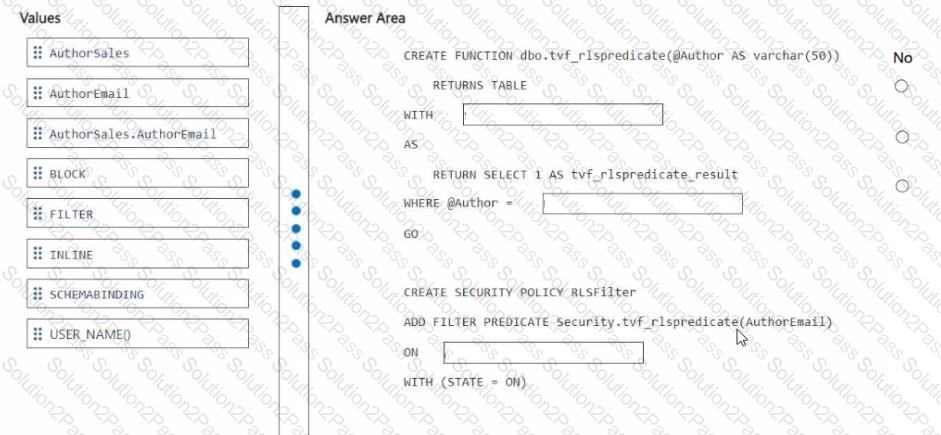

You need to ensure that the authors can see only their respective sales data.

How should you complete the statement? To answer, drag the appropriate values to the correct targets. Each value may be used once, more than once, or not at all. You may need to drag the split bar between panes or scroll to view content.

NOTE: Each correct selection is worth one point.

You have a Fabric workspace that contains a warehouse named Warehouse1. Data is loaded daily into Warehouse1 by using data pipelines and stored procedures.

You discover that the daily data load takes longer than expected.

You need to monitor Warehouse1 to identify the names of users that are actively running queries.

Which view should you use?

You have a Fabric workspace that contains an eventstream named EventStream1. EventStream1 outputs events to a table named Table1 in a lakehouse. The streaming data is sourced from motorway sensors and represents the speed of cars.

You need to add a transformation to EventStream1 to average the car speeds. The speeds must be grouped by non-overlapping and contiguous time intervals of one minute. Each event must belong to exactly one window.

Which windowing function should you use?
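The windowing behavior described above (fixed-size, non-overlapping, contiguous intervals in which each event falls into exactly one window) is commonly called a tumbling window. The sketch below is plain Python, not the eventstream editor or any Fabric API; the function name `tumbling_average` and the `(timestamp, speed)` event shape are assumptions made only for illustration.

```python
from collections import defaultdict
from datetime import datetime, timezone

WINDOW_SECONDS = 60  # one-minute windows, as in the requirement


def tumbling_average(events, window_seconds=WINDOW_SECONDS):
    """Average speeds per tumbling window.

    `events` is an iterable of (timezone-aware datetime, speed) pairs
    (a hypothetical shape). Returns {window_start_epoch_seconds: average}.
    """
    totals = defaultdict(lambda: [0.0, 0])  # window start -> [sum, count]
    for timestamp, speed in events:
        epoch = int(timestamp.timestamp())
        # Round down to the window boundary: every event maps to exactly
        # one window, and consecutive windows never overlap.
        window_start = epoch - (epoch % window_seconds)
        totals[window_start][0] += speed
        totals[window_start][1] += 1
    return {
        start: total / count
        for start, (total, count) in sorted(totals.items())
    }
```

An event stamped exactly on a minute boundary belongs to the window that starts at that boundary, which is what keeps the windows contiguous without double counting.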

You have a Fabric warehouse named DW1. DW1 contains a table that stores sales data and is used by multiple sales representatives.

You plan to implement row-level security (RLS).

You need to ensure that the sales representatives can see only their respective data.

Which warehouse object do you require to implement RLS?

You have a Fabric workspace that contains a lakehouse named Lakehouse1.

In an external data source, you have data files that are 500 GB each. A new file is added every day.

You need to ingest the data into Lakehouse1 without applying any transformations. The solution must meet the following requirements:

• Trigger the process when a new file is added.

• Provide the highest throughput.

Which type of item should you use to ingest the data?

HOTSPOT

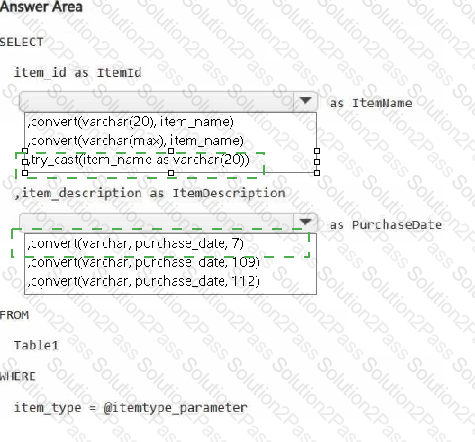

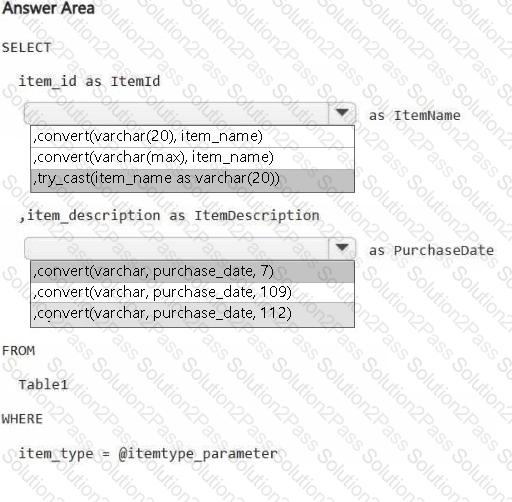

You have a Fabric workspace.

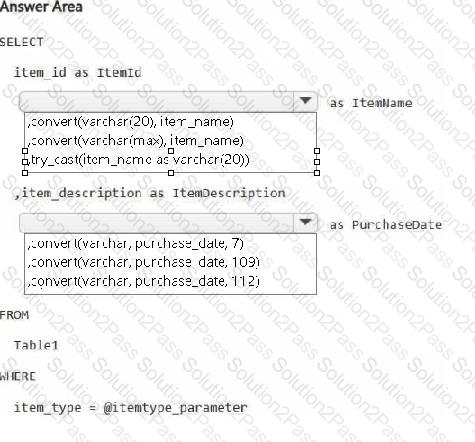

You are debugging a statement and discover the following issues:

• Sometimes, the statement fails to return all the expected rows.

• The PurchaseDate output column is NOT in the expected format of mmm dd, yy.

You need to resolve the issues. The solution must ensure that the data types of the results are retained. The results can contain blank cells.

How should you complete the statement? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

You need to populate the MAR1 data in the bronze layer.

Which two types of activities should you include in the pipeline? Each correct answer presents part of the solution.

NOTE: Each correct selection is worth one point.

You have an Azure event hub. Each event contains the following fields:

• BikepointID

• Street

• Neighbourhood

• Latitude

• Longitude

• No_Bikes

• No_Empty_Docks

You need to ingest the events. The solution must only retain events that have a Neighbourhood value of Chelsea, and then store the retained events in a Fabric lakehouse.

What should you use?
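The retention rule in this scenario is a simple filter on one field. The sketch below shows that logic in plain Python only; it does not represent any Fabric or Azure Event Hubs API. The function name `retain_neighbourhood` and the dictionary event shape are assumptions for illustration, with keys taken from the field list above.

```python
def retain_neighbourhood(events, neighbourhood="Chelsea"):
    """Keep only events whose Neighbourhood field equals `neighbourhood`.

    `events` is an iterable of dicts keyed by the event field names
    (a hypothetical in-memory representation of the stream).
    """
    return [
        event for event in events
        if event.get("Neighbourhood") == neighbourhood
    ]
```

Events that lack the Neighbourhood field are dropped as well, because `dict.get` returns `None` for a missing key.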
